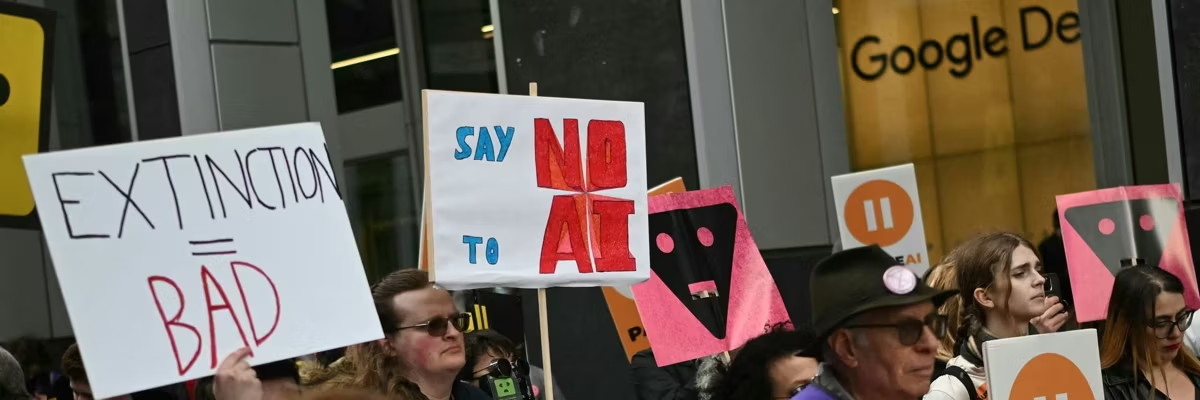

Protesters gather with banners and placards outside the offices of Google DeepMind in London during a February 28, 2026, protest organized by PauseAI UK and other groups.

600+ Workers Protest as Google Signs $200 Million Secret Pentagon AI Warfare Deal

“Human lives are already being lost and civil liberties put at risk at home and abroad from misuses of the technology we’re playing a key role in building.”

As Google on Monday became the latest player in the artificial intelligence arms race to sign a classified deal with the US Department of Defense, hundreds of workers at the Silicon Valley giant demanded that its CEO prevent the Pentagon from using the company's AI models for covert work.

Reuters reported that the $200 million agreement includes safety filters and allows the Pentagon to use Google's AI "for any lawful purpose" but not for the development of lethal autonomous weapons systems—commonly known as "killer robots"—or domestic surveillance without human oversight and control.

According to The Information's Erin Woo, the deal does not give Google “any right to control or veto lawful government operational decision-making.”

The agreement also reportedly requires Google to adjust its AI safety settings at the government's request.

“We are proud to be part of a broad consortium of leading AI labs and technology and cloud companies providing AI services and infrastructure in support of national security,” a Google spokesperson told The Information.

More than 600 Google employees—many of them from the company's DeepMind AI laboratory—sent a letter Monday to CEO Sundar Pichai demanding that he block the US military from using the firm's artificial intelligence technology for classified projects.

“We want to see AI benefit humanity; not to see it being used in inhumane or extremely harmful ways,” the letter says, according to The Washington Post. “This includes lethal autonomous weapons and mass surveillance but extends beyond.”

“The only way to guarantee that Google does not become associated with such harms is to reject any classified workloads,” the workers stressed. “Otherwise, such uses may occur without our knowledge or the power to stop them.”

Thousands of AI experts have called for a pause on the development and deployment of advanced AI technology. However, tech companies and military officials have argued—much as the military-industrial complex did with nuclear weapons during the Cold War—that if the US does not pursue advanced AI, rivals like China will, leaving the US irrecoverably behind.

As US and allied forces from Israel to Ukraine use AI to make life-and-death wartime decisions, including selecting attack targets at a rate unfathomable just a few years ago, the technology is expediting Israel's massacres in Gaza and Lebanon and US-Israeli killings in Iran.

“Human lives are already being lost and civil liberties put at risk at home and abroad from misuses of the technology we’re playing a key role in building,” the Google workers' letter states.

The policies and actions of the humans in charge of the US government and military have also stoked fears about their use of AI.

US Defense Secretary Pete Hegseth, for example, has overseen the dismantling of initiatives aimed at reducing wartime harm to civilians—hundreds of thousands of whom have been killed in US-led wars during this century, according to experts. Hegseth has instead promoted "maximum lethality" for US forces while expressing disdain for what he called "stupid rules of engagement" designed to minimize civilian harm.

Critics say their concerns have been validated by events including the US cruise missile strike on a girls' school in Iran that killed 168 children and staff, as well as Israeli airstrikes, many of them using US-supplied bombs, that have killed tens of thousands of Palestinian civilians in Gaza.

Companies that have run afoul of the Trump administration for refusing military AI use requests also risk getting left behind. Anthropic—maker of the AI assistant Claude—lost a $200 million Pentagon contract and is facing a government blacklist and legal battles after the company refused to loosen safety restrictions on autonomous weapons and surveillance.

Meanwhile, OpenAI, which makes the generative AI platform ChatGPT, rewrote its "no military use" policy to allow "national security" applications of its products, opening the door to lucrative Pentagon contracts.

Not wanting to get left behind as President Donald Trump returned to office last year, Google quietly dropped its pledge not to use artificial intelligence for weapons or surveillance, a stark departure from the spirit of the company's founding motto, "Don't be evil," which it had already ditched in 2018.

Pentagon contracts followed, and Google reportedly hopes to add $6 billion in AI deals by next year.

Most AI experts agree that it's not a matter of if, but when, artificial intelligence surpasses human capabilities. Experts increasingly view AI as an emerging new species, and prominent voices, including philosopher Nick Bostrom, Machine Intelligence Research Institute co-founder Eliezer Yudkowsky, and "Godfather of AI" Geoffrey Hinton, have noted that when a more intelligent species' goals conflict with those of a less intelligent one, the less intelligent species tends to lose, usually catastrophically.

Hinton is so concerned that he quit Google in 2023 so he could speak openly about the remote but growing risk of AI one day wiping out humanity.

The perceived probability of existentially catastrophic outcomes from AI, known as p(doom), was once the stuff of jokes. Now, AI experts' p(doom) predictions are watched like weather or market forecasts. Yudkowsky has said there's a greater than 95% chance of AI-driven catastrophe.

Hinton, who was awarded the 2024 Nobel Prize in physics for his work on neural networks, the foundational technology behind modern AI, is relatively more optimistic, putting the odds at 10-20%.

"There are very few examples of more intelligent things being controlled by less intelligent things," he said after winning the Nobel Prize.

- Question for Hegseth: Did US Military Rely on AI Targeting for Bombing of Iranian School? ›

- Hegseth Demands Anthropic Let Military Use AI However It Wants—Even for Autonomous Killer Drones and Spying On Americans ›

- Hegseth Says Pentagon Project Will Put Artificial Intelligence 'Into the Hands of Every American Warrior' ›

- 'Corporate War Machine': With Trump in Office, Google Drops Pledge Not to Use AI to Develop Weapons ›
