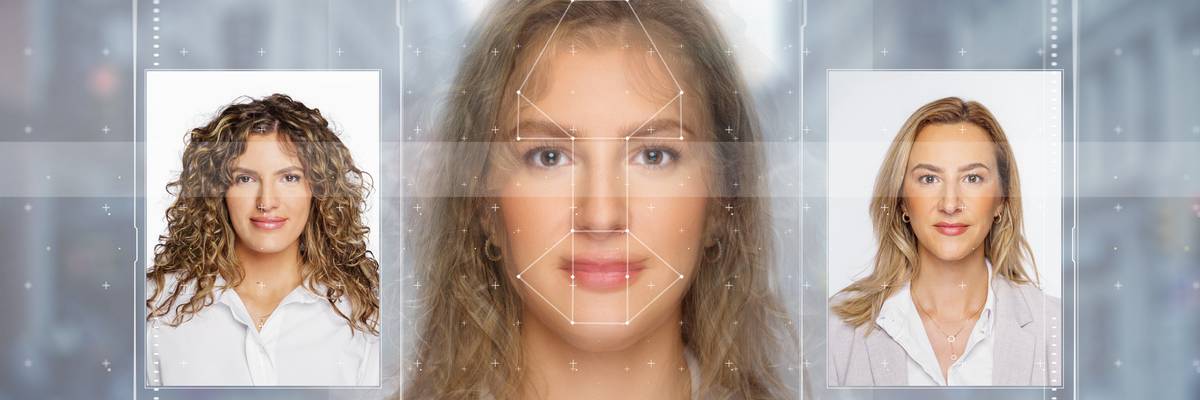

AI technology is seen changing the appearance of a woman.

Civil Society Groups Back FCC Effort to Confront Deceptive Deepfakes

"It's time for the FCC to protect voters from deepfakes," said one advocate.

A week after the Federal Election Commission announced it would not take action to regulate artificial intelligence-generated "deepfakes" in political ads, more than 40 civil society groups on Thursday called on the Federal Communications Commission to step in to ensure U.S. voters will be informed about fake content used by campaigns as they prepare to go to the polls.

The groups, including Public Citizen, the AFL-CIO, Access Now, and the Campaign Legal Center, backed a proposal by the FCC to require on-air and written disclosures when there is AI-generated content in political ads.

"It's time for the FCC to protect voters from deepfakes!" said Willmary Escoto, policy counsel for Access Now.

Unveiled in May by FCC Chair Jessica Rosenworcel, the FCC's proposal would apply the disclosure rules to ads pertaining to candidates and issues and push for a "specific definition of AI-generated content."

The civil society groups expressed their "strong support" for rules requiring "transparency in the use of AI-generated content in political advertisements on TV and radio, especially when the AI-generated content falsely depicts a candidate or persons saying or doing something that they never did with the intent to cause harm or deceive voters (known as 'deepfakes')."

"These rules are essential to safeguard the integrity of our democratic processes and ensure that voters are fully informed of the origins of political advertisements," wrote the groups.

Public Citizen condemned the Federal Election Commission last week when its Republican chair, Sean Cooksey, said the agency should "study how AI is actually used on the ground before considering any new rules."

The groups on Thursday said evidence already "abounds of the significant and deceptive impact that AI-generated content can have," with X owner Elon Musk recently posting a deepfake video that showed a manipulated image of Democratic presidential candidate and Vice President Kamala Harris, making it seem like she was saying she was the "ultimate diversity hire."

"The proposed disclosure requirements are a natural and common-sense extension of the FCC's existing mandates to ensure transparency in broadcasting in general and in political advertising on radio and TV in particular," said the groups.

They also commended the FCC's leadership in addressing the "critical issue" of deepfakes.
